Practical quantum computing has been big news this year, with significant advances being made on theoretical and technical frontiers. But one big stumbling block has remained – melding the delicate quantum landscape with the more familiar digital one. This new microprocessor design just might be the solution we need. This is big.

Researchers from the University of New South Wales (UNSW) have come up with a new kind of architecture that uses standard semiconductors common to modern processors to perform quantum calculations. Details aside, it basically means the power of quantum computing could be unlocked using the same kinds of technology that form the foundation of desktop computers and smartphones.

"We often think of

landing on the Moon as humanity's greatest technological marvel," says

designer Andrew Dzurak, director of the Australian National Fabrication

Facility at UNSW. "But creating a microprocessor chip with a billion

operating devices integrated together to work like a symphony – that you can

carry in your pocket – is an astounding technical achievement, and one that's

revolutionised modern life."

Whether or not you agree that such an achievement rivals space travel, the microprocessor was certainly a giant leap for computing.

"With quantum

computing, we are on the verge of another technological leap that could be as

deep and transformative. But a complete engineering design to realise this on a

single chip has been elusive," says Dzurak.

Quantum computing makes use of an odd quirk of reality – particles exist in a fog of possibility until they're connected to a system that defines their properties. This fog of possibility has mathematical characteristics that are immensely useful, if you know how to tap into them.

While traditional computing is binary, representing information as one of two symbols such as 1s and 0s, quantum computing allows an extra layer of complexity to be represented by that spectrum of probabilities. The problem is that qubits, the quantum bits that hold this fog, are delicate. Measurement isn't a tightly controlled affair: almost any stray interaction with the surroundings can act like one, meaning the particle can coalesce into reality – or collapse, to use the jargon – accidentally.
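To make that "spectrum of probabilities" a little more concrete, here is a minimal Python sketch of a single idealised qubit. It assumes the textbook description of a qubit as two amplitudes, alpha for 0 and beta for 1, where measuring gives 0 with probability |alpha|² and 1 with probability |beta|². The function names `measure` and `sample` are hypothetical, chosen for illustration; nothing here comes from the UNSW design, and the sketch ignores the noise that makes real qubits so fragile.

```python
import math
import random


def measure(alpha: complex, beta: complex, rng: random.Random) -> int:
    """Simulate measuring one idealised qubit in the state alpha|0> + beta|1>.

    The outcome is 0 with probability |alpha|^2 and 1 with probability
    |beta|^2. Once measured, the superposition is gone: the qubit is left
    in whichever state was observed (the 'collapse').
    """
    p0 = abs(alpha) ** 2
    p1 = abs(beta) ** 2
    # A valid qubit state must be normalised: |alpha|^2 + |beta|^2 = 1.
    if not math.isclose(p0 + p1, 1.0, rel_tol=1e-9):
        raise ValueError("state is not normalised")
    return 0 if rng.random() < p0 else 1


def sample(alpha: complex, beta: complex, shots: int, seed: int = 0) -> dict:
    """Prepare and measure the same state many times, counting each outcome."""
    rng = random.Random(seed)
    counts = {0: 0, 1: 0}
    for _ in range(shots):
        counts[measure(alpha, beta, rng)] += 1
    return counts


if __name__ == "__main__":
    # Equal superposition: each outcome should turn up roughly half the time.
    amp = 1 / math.sqrt(2)
    print(sample(amp, amp, shots=10_000))
```

Any single measurement yields a plain 0 or 1; the probabilities only show up as the pattern across many repeated runs.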

Since anywhere from hundreds to many hundreds of thousands of qubits are needed to make the whole thing worthwhile, there's plenty of room for unwanted collapses. To help ensure unstable qubits don't introduce too many errors, they need to be arranged in such a way as to make them more robust.

"So we need to use

error-correcting codes which employ multiple qubits to store a single piece of

data," says Dzurak. "Our chip blueprint incorporates a new type of

error-correcting code designed specifically for spin qubits, and involves a

sophisticated protocol of operations across the millions of qubits."
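The basic idea of spreading one piece of data across several physical carriers can be illustrated with the simplest error-correcting scheme, a three-bit repetition code. The Python sketch below is a classical analogue assumed purely for illustration. It is not the spin-qubit code in the UNSW blueprint. Real quantum codes cannot simply copy and read out their qubits, because reading them would collapse them. For that reason, this version never reads the data bits directly and instead checks only the parities between neighbours, much as quantum codes do. All names (`encode`, `correct`, `SYNDROME_TABLE` and so on) are hypothetical.

```python
import random

# Syndrome (parity of bits 0 and 1, parity of bits 1 and 2) -> index of the
# bit to flip back. (0, 0) means no single-bit error was detected.
SYNDROME_TABLE = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def encode(bit: int) -> list[int]:
    """Store one logical bit redundantly in three physical bits."""
    return [bit, bit, bit]


def apply_noise(code: list[int], p: float, rng: random.Random) -> list[int]:
    """Flip each physical bit independently with probability p."""
    return [b ^ 1 if rng.random() < p else b for b in code]


def correct(code: list[int]) -> list[int]:
    """Check two neighbouring parities (the 'syndrome') and undo one flip."""
    syndrome = (code[0] ^ code[1], code[1] ^ code[2])
    fixed = list(code)
    flip = SYNDROME_TABLE[syndrome]
    if flip is not None:
        fixed[flip] ^= 1
    return fixed


def decode(code: list[int]) -> int:
    """After correction all three bits agree, so read any one of them."""
    return code[0]


def logical_error_rate(p: float, trials: int, seed: int = 1) -> float:
    """Estimate how often the stored bit comes out wrong despite correction."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        noisy = apply_noise(encode(0), p, rng)
        if decode(correct(noisy)) != 0:
            failures += 1
    return failures / trials


if __name__ == "__main__":
    p = 0.05
    print("physical error rate:", p)
    print("logical error rate: ", logical_error_rate(p, trials=100_000))
```

Any single flip is repaired. The stored bit is lost only when two or more of the three carriers fail together, which happens with probability 3p²(1 − p) + p³, or about 0.007 for p = 0.05. That is well below the 5% error rate of each individual carrier, and larger codes push the failure rate down further at the cost of more qubits.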

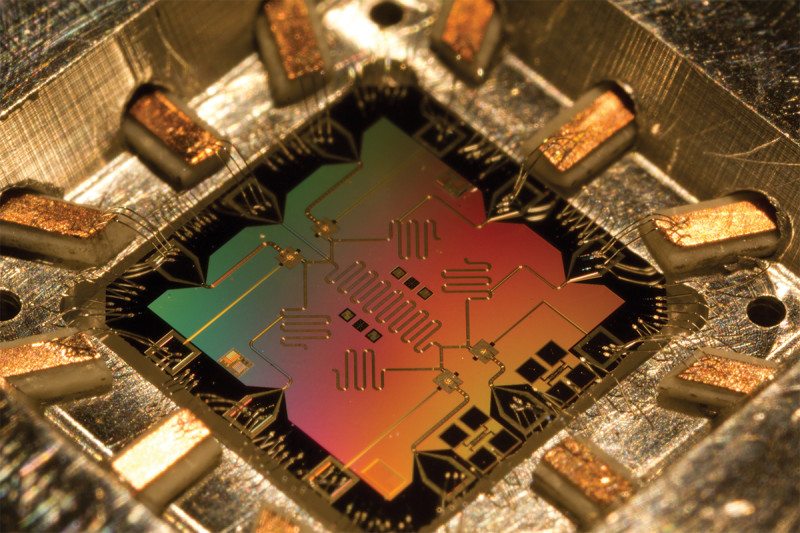

This design is the first attempt to put all of the conventional silicon circuitry needed to control and read the millions of qubits required for quantum computing onto one chip. In simple terms, conventional silicon transistors are used to control a flat grid of qubits in much the same way logic gates manage bits inside your desktop's processor.

"By selecting

electrodes above a qubit, we can control a qubit's spin, which stores the

quantum binary code of a 0 or 1," explains lead author of the study, Menno

Veldhorst, who conducted the research while at UNSW. "And by selecting

electrodes between the qubits, two-qubit logic interactions, or calculations,

can be performed between qubits."

We're still some way off combining these advances into solid pieces of technology. Even once we have the first machines capable of modelling molecules in super high detail, or crunching the stats on climate change on an unprecedented scale, we will need coders who know how to make use of qubits.

Microsoft has its eye on that eventuality, recently releasing a free preview of its new quantum development kit for tomorrow's programmers keen for a head start.

It's happening. One by one, the technological hurdles are falling.

Via ScienceAlert